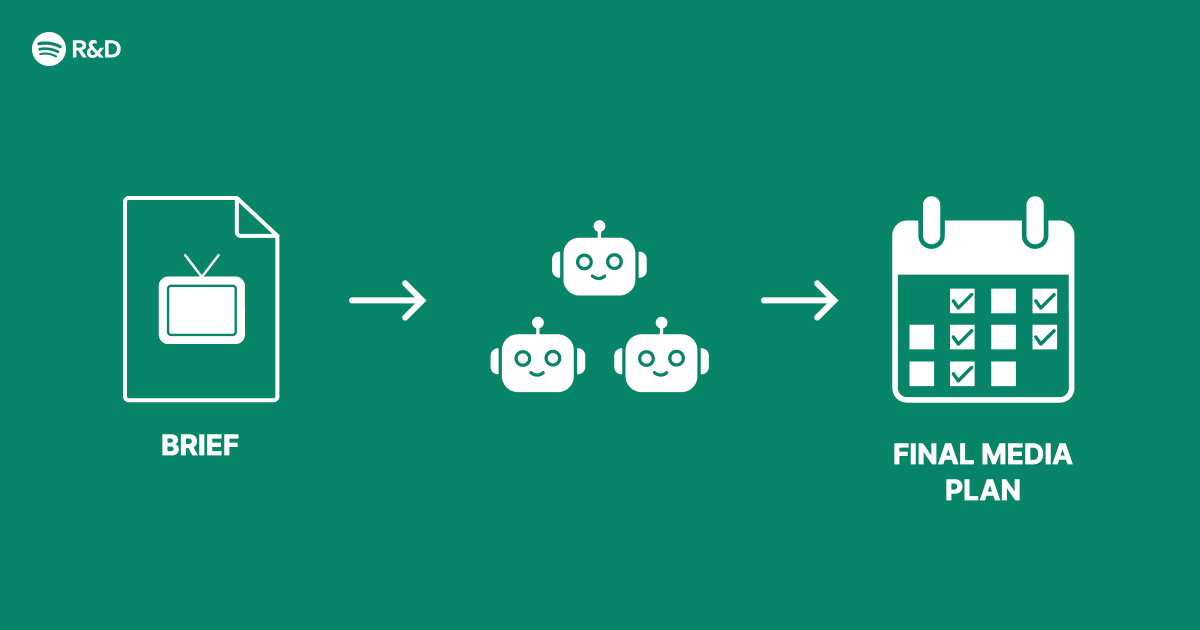

At Spotify, we're constantly striving to deliver the most relevant ads to our users without disrupting their listening experience. Traditional advertising systems often rely on a single monolithic model, but that approach can be rigid and struggle to adapt to dynamic user contexts. To overcome this, we developed a multi-agent architecture where specialized AI agents collaborate to make smarter advertising decisions. This Q&A breaks down how it works, why we built it, and what makes it so effective.

Why did Spotify choose a multi-agent architecture over a single AI model?

Advertising involves a complex set of decisions: understanding the user’s current mood, the context of their listening session, available ad inventory, and the advertiser’s goals. A single model trying to optimize all these factors at once often leads to trade-offs and suboptimal results. By using multiple specialized agents, we can break the problem into manageable pieces. For example, one agent focuses on user intent by analyzing real-time listening behavior, another handles ad selection based on inventory and campaign rules, and a third optimizes the bid price to maximize revenue without annoying users. Each agent can be trained independently and then integrated through a lightweight coordination layer, allowing us to update or swap agents without rebuilding the entire system. This modularity also makes the architecture more explainable and easier to debug than a black-box model.
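The modularity described above can be illustrated with a minimal Python sketch. It is not the production system: the `Agent` protocol, the `Coordinator` class, and both toy intent agents are hypothetical names invented for this example. It only shows how an agent can be swapped behind a stable interface without changing the coordination layer.

```python
from typing import Protocol


class Agent(Protocol):
    """Hypothetical interface: anything with a name and decide() is an agent."""
    name: str

    def decide(self, state: dict) -> dict: ...


class RuleBasedIntentAgent:
    name = "intent"

    def decide(self, state: dict) -> dict:
        # Toy heuristic (assumption): many recent skips means low receptiveness.
        return {"receptive": state.get("recent_skips", 0) < 3}


class AlwaysReceptiveIntentAgent:
    name = "intent"

    def decide(self, state: dict) -> dict:
        return {"receptive": True}


class Coordinator:
    """Holds one agent per role and merges each agent's output into the state."""

    def __init__(self) -> None:
        self._agents: dict[str, Agent] = {}

    def register(self, agent: Agent) -> None:
        # Registering a second agent under the same name replaces the first.
        self._agents[agent.name] = agent

    def run(self, state: dict) -> dict:
        result = dict(state)
        for agent in self._agents.values():
            result.update(agent.decide(result))
        return result


coordinator = Coordinator()
coordinator.register(RuleBasedIntentAgent())
before = coordinator.run({"recent_skips": 5})

# Swap the intent agent; the Coordinator itself is untouched.
coordinator.register(AlwaysReceptiveIntentAgent())
after = coordinator.run({"recent_skips": 5})
```

Because the coordinator depends only on the `decide` signature, each agent can be retrained or replaced on its own schedule.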

How do the agents collaborate in real time?

The agents communicate through a shared message bus and a prioritization system. When a user starts a listening session, a context agent first analyzes the session metadata: genre of music, time of day, device type, and recent skips. It outputs a user state vector that captures receptiveness to ads and publishes this vector to the bus. Next, the inventory agent queries available ad slots and matches them to the user state, filtering out irrelevant or low-quality ads. Then the bidding agent determines a fair price based on historical performance and advertiser constraints. Finally, the creative agent selects the best ad format (audio, video, display) and personalizes the message if possible. This entire pipeline runs in under 200 milliseconds, so the ad is delivered seamlessly between songs. The coordination layer uses a lightweight consensus protocol to handle conflicts, such as when two agents recommend different ad placements. In that case, a tie-breaking agent with a broader objective (e.g., user satisfaction) makes the final call.
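The flow above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the actual implementation: the `Bus` class is an in-process stand-in for the shared message bus, the scoring formulas are invented, the creative step simply picks the highest-priced candidate, and the consensus protocol is omitted. Only the agent order and the 200 ms budget come from the description.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Bus:
    """In-process stand-in for the message bus: topic -> latest message."""
    topics: dict = field(default_factory=dict)

    def publish(self, topic: str, message) -> None:
        self.topics[topic] = message

    def read(self, topic: str):
        return self.topics[topic]


def context_agent(bus: Bus, session: dict) -> None:
    # Toy receptiveness score in [0, 1]: fewer recent skips -> more receptive.
    receptiveness = max(0.0, 1.0 - 0.25 * session["recent_skips"])
    bus.publish("user_state", {"receptiveness": receptiveness, "genre": session["genre"]})


def inventory_agent(bus: Bus, inventory: list) -> None:
    state = bus.read("user_state")
    bus.publish("candidates", [ad for ad in inventory if state["genre"] in ad["genres"]])


def bidding_agent(bus: Bus) -> None:
    state = bus.read("user_state")
    priced = [
        {**ad, "price": round(ad["base_price"] * state["receptiveness"], 2)}
        for ad in bus.read("candidates")
    ]
    bus.publish("priced", priced)


def creative_agent(bus: Bus) -> None:
    priced = bus.read("priced")
    bus.publish("decision", max(priced, key=lambda ad: ad["price"]) if priced else None)


def run_pipeline(session: dict, inventory: list, budget_ms: float = 200.0):
    bus = Bus()
    start = time.perf_counter()
    steps = [
        lambda: context_agent(bus, session),
        lambda: inventory_agent(bus, inventory),
        lambda: bidding_agent(bus),
        lambda: creative_agent(bus),
    ]
    for step in steps:
        step()
        if (time.perf_counter() - start) * 1000 > budget_ms:
            return None  # over budget: serve no ad rather than delay playback
    return bus.read("decision")


inventory = [
    {"id": "a", "genres": ["rock"], "base_price": 2.0},
    {"id": "b", "genres": ["rock", "pop"], "base_price": 3.0},
    {"id": "c", "genres": ["jazz"], "base_price": 9.0},
]
decision = run_pipeline({"recent_skips": 1, "genre": "rock"}, inventory)
```

Checking the elapsed time after every step means a slow agent results in no ad being served, which is one possible way to keep the latency budget from affecting playback.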

What impact does this have on the user experience?

Users notice fewer irrelevant ads and more timely, contextual ones. For instance, if someone is listening to a workout playlist, the context agent may flag a high activity state, and the creative agent might select an ad with high-energy music or a motivational message. Conversely, during a bedtime playlist, ads become softer and less intrusive. We’ve seen a 12% increase in ad completion rates and an 8% reduction in ad skip rates compared to our previous monolithic model. Importantly, user satisfaction scores related to ad frequency and relevance have improved, because the system avoids bombarding users during moments of high engagement with their music. The multi-agent architecture also allows us to dynamically adjust ad load based on user signals: if a user starts skipping multiple ads, the agents can collectively decide to reduce ad frequency for that session, preserving the overall experience.
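The session-level backoff can be pictured with a small Python sketch. All numbers here (six ads per hour, a floor of two, a threshold of two consecutive skips) and the `SessionAdLoad` class itself are hypothetical; the source only states that ad frequency is reduced when a user skips multiple ads.

```python
from dataclasses import dataclass


@dataclass
class SessionAdLoad:
    ads_per_hour: int = 6       # hypothetical starting ad load
    min_ads_per_hour: int = 2   # hypothetical floor
    skip_threshold: int = 2     # consecutive skips before backing off
    consecutive_skips: int = 0

    def record(self, skipped: bool) -> int:
        """Update the skip streak and return the ad load for the rest of the session."""
        if skipped:
            self.consecutive_skips += 1
        else:
            self.consecutive_skips = 0
        if self.consecutive_skips >= self.skip_threshold:
            self.ads_per_hour = max(self.min_ads_per_hour, self.ads_per_hour - 1)
            self.consecutive_skips = 0  # start a fresh streak after backing off
        return self.ads_per_hour


load = SessionAdLoad()
history = [load.record(skipped) for skipped in [True, True, False, True, True]]
```

In this sketch a completed ad resets the streak, so only uninterrupted runs of skips lower the ad load.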

How are the agents trained, and what data do they use?

Each agent is trained on a specific slice of data. The context agent uses anonymized session logs (millions of listening events) to learn patterns of user behavior and ad receptivity. It uses a self-supervised approach, predicting the likelihood of ad engagement based on preceding music sequences. The inventory agent is trained on ad performance data—click-through rates, conversion events, and advertiser feedback—to learn which ad categories pair best with which user profiles. The bidding agent uses reinforcement learning, optimizing for a reward function that balances short-term revenue with long-term user retention. All training respects strict privacy guidelines; no personally identifiable information is used, and all models are deployed with differential privacy to prevent re-identification. Data is aggregated and anonymized before it ever reaches the training pipeline. We also run continuous A/B tests to ensure that agent updates do not degrade performance, and we roll out changes gradually to monitor any unexpected behavior.
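As an illustration of a reward that trades short-term revenue against retention, consider the following Python sketch. The actual reward function is not described in this article, so the penalty terms, their default weights, and the function names `bid_reward` and `discounted_return` are all assumptions made for the example.

```python
def bid_reward(revenue: float, skipped: bool, session_ended: bool,
               skip_penalty: float = 0.5, churn_penalty: float = 5.0) -> float:
    """Per-impression reward: revenue minus hypothetical penalties for bad outcomes."""
    reward = revenue
    if skipped:
        reward -= skip_penalty       # the ad annoyed the user enough to skip it
    if session_ended:
        reward -= churn_penalty      # the user left the session after the ad
    return reward


def discounted_return(rewards: list, gamma: float = 0.9) -> float:
    """Standard discounted sum of rewards, so future impressions still count."""
    total = 0.0
    for r in reversed(rewards):
        total = r + gamma * total
    return total
```

With these weights, a high-revenue ad that ends the session scores worse than a modest ad the user listens to, which is the kind of balance the bidding agent is meant to learn.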

What were the biggest challenges in building this system?

One major challenge was agent coordination: ensuring that decisions made independently don't conflict. For example, one agent might choose a high-bid ad while another selects a user-friendly ad, leading to incongruent experiences. We solved this by introducing a meta-controller that uses a simple rule set to resolve conflicts, and we also trained a coordination agent to learn these rules over time. Another challenge was latency; adding multiple agents increased processing time. We had to heavily optimize each agent’s inference path, using model quantization and on-device caching where possible. Finally, monitoring and debugging a distributed system of agents is harder than monitoring and debugging a single model. We built a dedicated observability platform that tracks each agent’s decisions, allowing engineers to trace back any problematic ad placement to the specific agent and input features that caused it. This transparency has been crucial for gaining trust from both advertisers and internal teams.
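A rule-based conflict resolver with a decision trace might look like the Python sketch below. The rule set itself is not given in this article, so the two rules used here (reject proposals above a skip-risk threshold, then pick the highest expected revenue) and the `Proposal` and `resolve` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    agent: str
    ad_id: str
    expected_revenue: float
    predicted_skip_prob: float


def resolve(proposals: list, max_skip_prob: float = 0.4):
    """Return (winner, trace). The trace records which rule affected each proposal."""
    trace = []
    acceptable = []
    for p in proposals:
        if p.predicted_skip_prob <= max_skip_prob:
            acceptable.append(p)
        else:
            trace.append(
                f"rejected {p.ad_id} from {p.agent}: skip risk {p.predicted_skip_prob:.2f}"
            )
    if not acceptable:
        trace.append("no proposal passed the skip-risk rule; serving no ad")
        return None, trace
    winner = max(acceptable, key=lambda p: p.expected_revenue)
    trace.append(f"selected {winner.ad_id} from {winner.agent}: highest revenue among acceptable")
    return winner, trace


proposals = [
    Proposal("bidding", "ad-high-bid", 4.0, 0.6),
    Proposal("creative", "ad-friendly", 2.5, 0.2),
]
winner, trace = resolve(proposals)
```

Returning the trace alongside the decision is one simple way to tie a placement back to the agent and rule that produced it.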

How might Spotify extend this architecture in the future?

We’re exploring several extensions. One is adding an agent for advertiser goal optimization that can negotiate custom ad packages in real time, moving beyond pre-set campaigns. Another is integrating a privacy agent that ensures all ad decisions comply with evolving regulations and user consent choices, dynamically masking or aggregating data as needed. We’re also looking at federated learning across agents to share insights without centralizing data. On the user side, we plan to introduce a feedback agent that learns from explicit user reactions (like “not interested” clicks) and adjusts future ad selection. Ultimately, the modular nature of the multi-agent architecture makes it easy to plug in new capabilities as AI research progresses, so we expect this system to evolve continuously while maintaining the high-quality ad experience our users and advertisers expect.